Sales managers track call quality at scale by using scorecards, structured sampling, coaching workflows, conversation analytics, and outcome-based reporting. The goal is not to review every call. The goal is to review the right calls, measure quality consistently, spot patterns early, and improve rep performance across a larger team.

A few call reviews can help a small team. A growing sales team needs a system. Once call volume expands across reps, regions, shifts, call types, and lead sources, call quality can no longer depend on random spot checks or manager instinct alone.

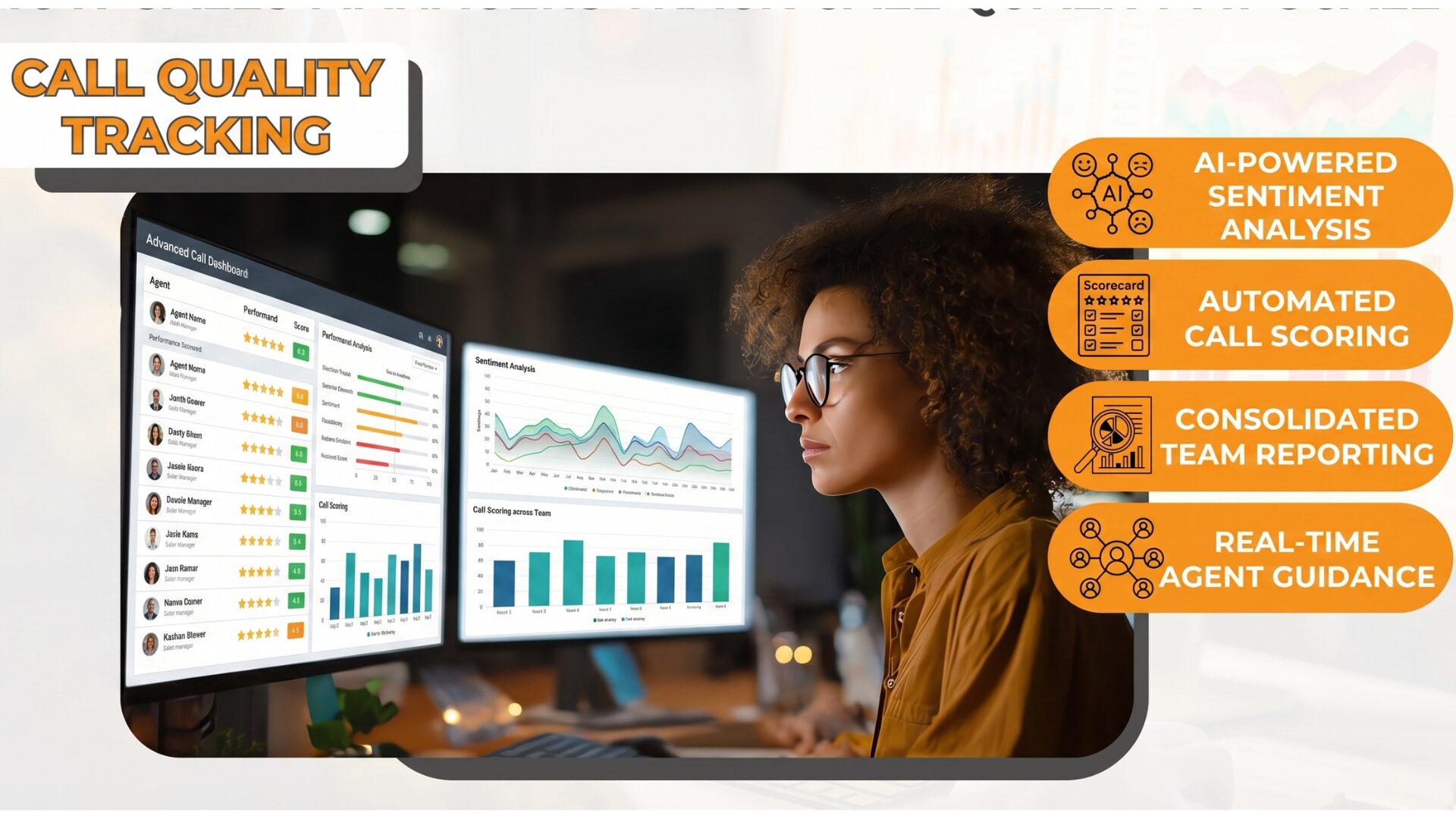

That is where structured call quality tracking matters. Sales managers need a repeatable way to review conversations, score performance, coach reps, track compliance in recorded sales calls, and connect call behavior to pipeline and revenue. Strong call tracking and analytics make that process more manageable by combining recordings, searchable transcripts, outcome visibility, and pattern detection in one workflow. AvidTrak’s current platform supports this kind of review through call recording, AI-powered transcription, conversation analytics, and live monitoring tools.

Why call quality becomes harder to manage at scale

In a small team, a sales manager can hear more calls directly, step in faster, and coach reps in the moment. That becomes much harder once the team grows across more reps, more calls, more managers, and more sales motions.

Call quality usually becomes harder to manage when the business has:

- more reps

- more calls per rep

- multiple products or territories

- inbound and outbound teams

- remote or hybrid staff

- different lead sources

- multiple managers scoring calls differently

At that point, call quality usually breaks down in three ways.

1. Scoring becomes inconsistent

One manager is stricter than another. One rep gets detailed feedback while another gets almost none. Without shared scorecards and calibration, call reviews start to feel subjective.

2. Volume outpaces manual review

As call volume grows, managers can no longer rely on random spot checks. There are simply too many calls to review without a structured sampling process and stronger call tracking and analytics.

3. Review does not turn into action

Many teams record calls but do not turn those recordings into patterns, coaching plans, compliance checks, or process improvements. That weakens the value of the entire review process.

This is why sales managers need a system, not just recordings. A scalable call quality program should make it easier to review the right calls, score them consistently, coach clearly, and track whether quality is improving over time. It should also support compliance tracking in recorded sales calls, so managers are not only reviewing performance but also checking whether required steps, disclosures, and process standards are being followed.

What sales managers are actually trying to measure

Call quality is not just about whether a rep sounds polished or friendly. At scale, sales managers need to measure whether the rep is doing the things that move the conversation toward a real business outcome.

That usually includes whether the rep:

- opens the call clearly

- confirms context and need

- asks strong discovery questions

- listens instead of rushing

- handles objections effectively

- explains value in a way that fits the prospect

- sets a clear next step

- updates the CRM or required records correctly

- follows the process without sounding scripted

A high-quality call should improve the chance of a meaningful result. Depending on the team, that result may be a booked meeting, a qualified lead, a quote, a demo, or a sale.

This is also where best practices for call tracking and analytics in sales become more useful. Managers are not only reviewing rep behavior. They are also trying to understand which behaviors connect to better call outcomes, which patterns show up across teams, and where coaching should focus first.

In teams with recorded conversations, this is also where managers can see more clearly how to track compliance in recorded sales calls. A strong review process should not only check selling behavior. It should also confirm whether the required steps, disclosures, documentation, or intake standards were followed during the call.

1. Start with a clear definition of a good call

This is the first step many teams skip.

If managers and reps do not agree on what a good call looks like, call quality turns into opinion. One manager may care most about tone. Another may care most about discovery depth. Another may only care whether the rep booked the next step.

Sales managers need a written definition of a good call based on the sales motion.

For example, a good inbound B2B qualification call may include:

- the rep confirms the lead source or reason for calling

- asks questions about need, urgency, fit, and timeline

- explains the right solution clearly

- confirms the next step before ending

- logs the outcome correctly

A good outbound prospecting call may look different:

- the rep earns the first 30 seconds

- the rep speaks to a relevant pain point

- the rep avoids a long pitch

- the rep handles the first objection without losing control

- the rep secures a meeting or a clear follow-up path

The scorecard should follow the sales motion, not the other way around.

2. Build a call scorecard that managers can actually use

A scorecard is the backbone of call quality tracking at scale. It keeps reviews consistent and makes coaching easier.

A good scorecard is short enough to use often and specific enough to be useful. Most teams do better with 6 to 10 scoring areas than with 25 small items.

A practical scorecard often includes these categories:

1. Opening

Did the rep start with clarity and control? Did they set the tone quickly?

2. Discovery

Did the rep ask useful questions? Did they uncover the real need?

3. Listening

Did the rep respond to what the prospect actually said, or just follow a script?

4. Value Communication

Did the rep explain the offer in a way that matched the prospect’s problem?

5. Objection Handling

Did the rep stay calm, answer clearly, and move the conversation forward?

6. Next Step Control

Did the rep end with a clear next action?

7. Compliance or Process

Did the rep follow required steps, disclosures, and CRM updates?

Each item can be scored on a simple scale such as:

- 0 = missed

- 1 = partially done

- 2 = done well

That simple model works better than a vague 1-to-10 score in many teams because it forces managers to judge observable behavior.
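To make the 0/1/2 scale concrete, here is a minimal Python sketch that turns one call's ratings into a percentage score. It assumes every category carries equal weight, and the category keys are hypothetical names based on the seven areas above; a real program may weight categories differently.

```python
# Hypothetical category keys based on the practical scorecard above.
CATEGORIES = [
    "opening", "discovery", "listening", "value_communication",
    "objection_handling", "next_step_control", "compliance_process",
]

VALID_SCORES = {0, 1, 2}  # 0 = missed, 1 = partially done, 2 = done well
MAX_PER_ITEM = 2


def score_call(ratings: dict[str, int]) -> float:
    """Return a call's quality score as a percentage of the maximum possible.

    Assumes equal weight per category (an assumption, not a standard).
    """
    missing = [c for c in CATEGORIES if c not in ratings]
    if missing:
        raise ValueError(f"unscored categories: {missing}")
    for category, value in ratings.items():
        if value not in VALID_SCORES:
            raise ValueError(f"{category}: score must be 0, 1, or 2, got {value}")
    total = sum(ratings[c] for c in CATEGORIES)
    return 100 * total / (MAX_PER_ITEM * len(CATEGORIES))
```

Rejecting incomplete or out-of-range ratings keeps managers from silently skipping a category, which is one of the ways scoring drifts between reviewers.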

3. Do not score every call the same way

This is one of the biggest mistakes in large teams.

A discovery call, a follow-up call, and a closing call should not be judged by the same scorecard. The job of the call is different.

Sales managers should create scorecards by call type, such as:

- inbound lead qualification

- outbound cold call

- demo booking call

- follow-up call

- pricing or proposal call

- retention or renewal call

That keeps scoring aligned with the purpose of the conversation.

For example, if a rep handles a short inbound call from someone who only wants to check availability, the manager should not score that call as if it were a long discovery conversation. The call should be judged by whether the rep handled that moment well, not by whether they forced steps that did not fit.

This is one of the best practices for call tracking and analytics in sales because it keeps reviews tied to the real sales motion. It also helps managers see patterns more accurately across different call types instead of blending everything into one quality score. In teams reviewing recordings, this approach also makes compliance tracking in recorded sales calls easier, because required behavior can be judged against the type of conversation actually being reviewed.
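One way to picture scorecards by call type is a lookup from call type to the categories that apply. The sketch below is a minimal illustration, assuming hypothetical call-type and category names and the same 0/1/2 scale; it shows how a short availability-check call is not penalized for skipping discovery.

```python
# Hypothetical scorecards per call type; names are illustrative only.
SCORECARDS = {
    "inbound_qualification": ["opening", "discovery", "value_communication",
                              "next_step_control", "compliance_process"],
    "outbound_cold_call": ["opening", "listening", "objection_handling",
                           "next_step_control"],
    "availability_check": ["opening", "listening", "next_step_control"],
}
MAX_PER_ITEM = 2  # 0 = missed, 1 = partially done, 2 = done well


def score_by_call_type(call_type: str, ratings: dict[str, int]) -> float:
    """Score a call only on the categories in its call type's scorecard."""
    categories = SCORECARDS[call_type]
    # Categories outside this scorecard are ignored; a category in the
    # scorecard that was left unrated counts as missed (0).
    total = sum(ratings.get(c, 0) for c in categories)
    return 100 * total / (MAX_PER_ITEM * len(categories))
```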

4. Use sampling, not full review

No manager can review every call in a growing team. Trying to do that usually leads to burnout or shallow reviews.

The better model is structured sampling.

A sales manager might review:

- 3 to 5 calls per rep per week in a smaller team

- 2 to 3 calls per rep per week in a larger team

- extra calls for new hires, low performers, or reps on coaching plans

- a balanced mix of call types, lead sources, and outcomes

This helps managers see enough to coach well without creating an impossible workload.

Sampling should include:

- wins

- losses

- no-decisions

- short calls

- long calls

- calls from strong reps

- calls from struggling reps

Only reviewing bad calls creates a distorted picture. Strong calls teach just as much.
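The sampling rules above can be sketched as a small stratified picker. This is a minimal illustration, assuming each call is a dict with hypothetical `rep` and `outcome` keys: it gives each rep a weekly quota, adds extra calls for reps on a watch list (new hires or coaching plans), and takes calls round-robin across outcomes so wins, losses, and no-decisions all show up.

```python
import random
from collections import defaultdict


def sample_calls(calls, per_rep=3, extra_for=frozenset(), extra=2,
                 stratify_by="outcome", seed=None):
    """Pick calls for review per rep, spreading picks across one field.

    calls: list of dicts with at least "rep" and the stratify_by key
    (an assumed schema). extra_for: reps who get `extra` additional calls.
    """
    rng = random.Random(seed)
    # rep -> stratum (e.g. outcome) -> list of calls
    by_rep = defaultdict(lambda: defaultdict(list))
    for call in calls:
        by_rep[call["rep"]][call[stratify_by]].append(call)

    selected = []
    for rep, strata in by_rep.items():
        quota = per_rep + (extra if rep in extra_for else 0)
        # Shuffle within each stratum, then take calls round-robin across strata
        pools = [rng.sample(group, len(group)) for group in strata.values()]
        rng.shuffle(pools)
        picks = []
        while len(picks) < quota and any(pools):
            for pool in pools:
                if pool and len(picks) < quota:
                    picks.append(pool.pop())
        selected.extend(picks)
    return selected
```

Passing `stratify_by="call_type"` or `"lead_source"` would balance the sample on those fields instead; balancing on several fields at once would need a combined key.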

5. Combine manual reviews with conversation intelligence

Once volume grows, manual review alone is not enough.

Sales managers should use conversation intelligence tools and call analytics to surface patterns faster. That includes:

- recordings

- searchable transcripts

- talk-to-listen ratio

- interruption rate

- hold time

- silence rate

- keyword and phrase tracking

- sentiment tracking

- objection mentions

- outcome tagging

These tools do not replace human judgment. They help managers know where to look.

For example:

- If pricing objections spike across one segment, managers can review those calls first.

- If one rep has an unusually high monologue rate, managers can coach on discovery and listening.

- If calls mentioning a competitor have lower conversion, managers can build rebuttal training around that theme.

This is how managers review smarter, not just more.
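Conversation intelligence platforms normally compute metrics like talk-to-listen ratio, silence rate, and interruptions for you. Purely to show what those numbers mean, here is a minimal sketch that derives them from diarized transcript segments. The `Segment` type, the "rep"/"prospect" labels, and the interruption rule (the rep starts speaking before the prospect's previous segment has ended) are assumptions; real tools may define these metrics differently.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    speaker: str   # "rep" or "prospect" (assumed diarization labels)
    start: float   # seconds from call start
    end: float


def conversation_metrics(segments: list[Segment]) -> dict[str, float]:
    segs = sorted(segments, key=lambda s: s.start)
    rep_time = sum(s.end - s.start for s in segs if s.speaker == "rep")
    prospect_time = sum(s.end - s.start for s in segs if s.speaker == "prospect")
    call_length = max(s.end for s in segs) - segs[0].start

    silence = 0.0
    rep_interruptions = 0
    latest_end = segs[0].end  # end of all speech heard so far
    for prev, cur in zip(segs, segs[1:]):
        if cur.start > latest_end:          # nobody was speaking in this gap
            silence += cur.start - latest_end
        if cur.speaker == "rep" and prev.speaker == "prospect" and cur.start < prev.end:
            rep_interruptions += 1          # rep talked over the prospect
        latest_end = max(latest_end, cur.end)

    return {
        "talk_to_listen": rep_time / prospect_time if prospect_time else float("inf"),
        "silence_rate": silence / call_length if call_length else 0.0,
        "rep_interruptions": rep_interruptions,
    }
```

A high talk-to-listen ratio on its own does not prove a weak call; it is a signal for where to listen first, which is the point made above.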

6. Create a review cadence that fits the team

Call quality tracking only works if it becomes part of a routine.

A workable cadence often looks like this:

Daily

Managers review urgent issues, flagged calls, missed opportunities, or compliance concerns.

Weekly

Managers score a set number of calls per rep, identify patterns, and run coaching sessions.

Monthly

Managers review team-wide trends, compare call quality against pipeline results, and adjust training priorities.

Quarterly

Managers update scorecards, review whether scoring still matches the sales motion, and align quality standards across managers.

Without cadence, call quality work becomes reactive and uneven.

7. Track trends, not just individual calls

A single call matters for coaching. Trends matter for management.

Sales managers should track:

- average quality score by rep

- average quality score by team

- score movement over time

- weakest scorecard areas

- strongest scorecard areas

- quality score by lead source

- quality score by call type

- coaching completion rate

- change in close rate after coaching

This helps answer questions such as:

- Which reps need support right now?

- Which skill is hurting the whole team?

- Which lead sources are producing weak-fit calls?

- Which managers are coaching effectively?

- Is call quality improving after training?

That is where call review becomes a management tool rather than a listening exercise.
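Most of the trend questions above come down to grouping reviewed scores by one field and comparing periods. The sketch below assumes each review is a dict with hypothetical `rep`, `score`, and `date` keys plus any grouping field (such as `lead_source` or `call_type`); it is an illustration, not a reporting tool.

```python
from collections import defaultdict
from datetime import date
from statistics import mean


def average_by(reviews, key):
    """Average quality score grouped by a review field, e.g. "rep", "team",
    "lead_source", or "call_type"."""
    groups = defaultdict(list)
    for r in reviews:
        groups[r[key]].append(r["score"])
    return {k: mean(v) for k, v in groups.items()}


def score_movement(reviews, split_date: date):
    """Per-rep change in average score: reviews on/after split_date minus
    reviews before it. Reps without reviews in both periods are skipped."""
    before = average_by([r for r in reviews if r["date"] < split_date], "rep")
    after = average_by([r for r in reviews if r["date"] >= split_date], "rep")
    return {rep: after[rep] - before[rep] for rep in before.keys() & after.keys()}
```

Setting `split_date` to the day a training program started gives a rough before/after view, though with small samples a change can easily be noise.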

8. Tie quality tracking to business outcomes

A score by itself is not enough. Sales managers should connect call quality to real outcomes.

The best call quality programs compare quality scores with:

- meeting booked rate

- qualified lead rate

- quote rate

- demo completion rate

- opportunity creation rate

- close rate

- average deal value

- retention or repeat purchase, if relevant

This matters because not every scoring item carries the same business weight.

For example, a SaaS team may find that reps with stronger discovery scores create more qualified pipeline, even if their calls are not the smoothest. A home services team may find that next-step control is the biggest predictor of booked appointments. A legal intake team may find that empathy and intake accuracy matter most.

Once managers see which call behaviors connect to results, coaching gets sharper.
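A simple way to start connecting behaviors to results is to compare outcome rates across ratings for one scorecard category. The sketch below assumes each review stores its 0/1/2 ratings and a boolean outcome field (the names are hypothetical). It shows association only: a higher booked rate among well-rated calls does not prove the behavior caused it, and small groups can mislead.

```python
from collections import defaultdict


def outcome_rate_by_rating(reviews, category, outcome="meeting_booked"):
    """Share of reviewed calls with a positive outcome, grouped by the
    0/1/2 rating given in one scorecard category.

    Each review is assumed to look like:
    {"ratings": {"discovery": 2, ...}, "meeting_booked": True}
    """
    counts = defaultdict(lambda: [0, 0])  # rating -> [calls with outcome, total calls]
    for r in reviews:
        rating = r["ratings"][category]
        counts[rating][0] += int(bool(r[outcome]))
        counts[rating][1] += 1
    return {rating: hits / total for rating, (hits, total) in sorted(counts.items())}
```

Running this for each category, and for each outcome the team cares about, is a rough way to see which scorecard items seem to carry the most business weight.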

9. Make calibration a formal process

When more than one manager scores calls, scoring drift becomes a real problem.

Calibration means managers listen to the same calls, score them independently, compare scores, and agree on what “good,” “partial,” and “missed” look like.

Without calibration:

- rep trust drops

- coaching becomes uneven

- quality reporting becomes unreliable

- performance conversations feel subjective

A simple calibration process works well:

- Pick 3 to 5 calls each month.

- Have managers score them independently.

- Compare where scores differ.

- Agree on scoring standards.

- Update examples inside the scorecard guide.

This keeps the program fair.
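The "compare where scores differ" step can be sketched as a small report. This assumes every manager independently scored the same calls and categories on the 0/1/2 scale; the nested-dict layout and names are hypothetical. It returns the share of items where all managers agreed exactly and lists each disagreement for discussion.

```python
def calibration_report(scores):
    """scores: {manager: {call_id: {category: 0 | 1 | 2}}}.

    Assumes every manager scored the same calls and categories
    (a KeyError is raised otherwise).
    """
    managers = sorted(scores)
    first = scores[managers[0]]
    items = [(call, cat) for call in sorted(first) for cat in sorted(first[call])]

    disagreements = {}
    for call, cat in items:
        given = {m: scores[m][call][cat] for m in managers}
        if len(set(given.values())) > 1:  # not everyone gave the same score
            disagreements[(call, cat)] = given

    agreement = 1 - len(disagreements) / len(items)
    return agreement, disagreements
```

Tracking the agreement rate month over month is one simple way to check whether calibration sessions are reducing scoring drift.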

10. Coach from patterns, not from random criticism

Call quality tracking should lead to better coaching, not more criticism.

The best coaching is:

- specific

- tied to real examples

- focused on one or two skills at a time

- repeated until the behavior improves

Weak coaching says, “You need to sound more confident.”

Strong coaching says, “On your last three pricing calls, you answered too early without asking why price was a concern. This week, pause first, ask one clarifying question, then respond.”

Managers should bring:

- one strong example

- one missed example

- one practice goal

- one follow-up review point

That keeps coaching grounded and measurable.

11. Use call libraries to train faster

Once managers review calls regularly, they should start building a call library.

This is one of the most useful assets in a scaled sales team.

A call library can include:

- strong discovery calls

- strong objection-handling examples

- weak calls with teachable moments

- strong calls by product or vertical

- strong calls by stage

- calls that show common mistakes

- competitor mention examples

- pricing discussion examples

This helps new hires ramp faster and gives managers ready material for team training.

For example, instead of telling a rep to “sound more consultative,” a manager can share two strong calls where a top rep handled the same situation well.

12. Use alerts and flags for high-risk situations

At scale, managers should not wait until weekly review to catch major issues.

Set alerts or flagging rules for calls that may need immediate review, such as:

- very short inbound calls from paid campaigns

- repeated missed objections

- negative sentiment

- calls with legal or compliance risk

- calls with high buyer intent but no next step

- repeated mentions of competitors

- calls marked as lost after strong initial interest

This helps managers step in before problems spread.

A good example is a multi-location admissions team. If several calls in one region mention “no one got back to me” or “I am still waiting,” the issue may not be rep skill alone. It may be a broken follow-up process. Flags help surface that fast.
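Flagging rules like these are usually configured inside the call analytics platform. As an illustration only, here is a minimal rule-based sketch. The call fields, the sentiment scale (assumed to run from -1 to 1), and the thresholds are all hypothetical and would need tuning to a team's own data.

```python
# Phrases that may point to a broken follow-up process (from the example above).
FOLLOW_UP_PHRASES = ("no one got back to me", "still waiting")


def flag_reasons(call, short_call_sec=30, negative_sentiment=-0.5):
    """Return the reasons a call should be reviewed now (empty list = no flag).

    `call` is an assumed dict with keys: direction, paid_campaign,
    duration_sec, sentiment (-1..1), high_intent, next_step_set,
    competitor_mentions, transcript.
    """
    reasons = []
    if (call["direction"] == "inbound" and call["paid_campaign"]
            and call["duration_sec"] < short_call_sec):
        reasons.append("short inbound call from paid campaign")
    if call["sentiment"] <= negative_sentiment:
        reasons.append("negative sentiment")
    if call["high_intent"] and not call["next_step_set"]:
        reasons.append("high intent but no next step")
    if call["competitor_mentions"] >= 2:
        reasons.append("repeated competitor mentions")
    text = call["transcript"].lower()
    if any(phrase in text for phrase in FOLLOW_UP_PHRASES):
        reasons.append("possible broken follow-up process")
    return reasons
```

Counting the "possible broken follow-up process" flags by region would surface the multi-location pattern described above.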

13. Separate rep quality from lead quality

This is critical.

Sometimes weak calls reflect poor rep performance. Sometimes they reflect poor-fit leads, bad routing, weak targeting, or unclear offers.

Sales managers should compare call quality by:

- campaign

- lead source

- territory

- product line

- team

- call type

For example, if strong reps suddenly show lower quality scores on one paid campaign, the issue may be lead fit. If one territory has more rushed or frustrated calls, the issue may be volume pressure or staffing. If one product line creates repeated confusion, the messaging may be weak.

Good managers do not blame reps for system problems.
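The paid-campaign example above can be turned into a rough check: compare how strong reps score on each lead source against their own overall average. The sketch assumes reviews are dicts with hypothetical `rep`, `lead_source`, and `score` keys, that the manager supplies the set of strong reps, and an arbitrary 10-point threshold. Using each rep's own average as the baseline keeps one unusually strong or weak rep from skewing the result.

```python
from collections import defaultdict
from statistics import mean


def lead_fit_suspects(reviews, strong_reps, min_drop=10.0):
    """Return lead sources where strong reps score at least `min_drop`
    points below their own overall average (mean gap per source)."""
    overall = defaultdict(list)      # rep -> all scores
    per_source = defaultdict(list)   # (lead_source, rep) -> scores
    for r in reviews:
        if r["rep"] in strong_reps:
            overall[r["rep"]].append(r["score"])
            per_source[(r["lead_source"], r["rep"])].append(r["score"])

    # Gap = rep's overall average minus that rep's average on this source
    gaps = defaultdict(list)
    for (source, rep), scores in per_source.items():
        gaps[source].append(mean(overall[rep]) - mean(scores))

    return {source: mean(g) for source, g in gaps.items() if mean(g) >= min_drop}
```

A flagged source is a prompt to look at targeting, routing, or the offer before coaching the reps, not a verdict on its own.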

14. Build a simple quality dashboard

A sales manager does not need a complicated dashboard at the start. They need a useful one.

A practical call quality dashboard can include:

- calls reviewed this week

- score by rep

- team average score

- lowest-scoring skill area

- highest-scoring skill area

- flagged calls

- coaching sessions completed

- score change over 30 days

- quality score vs conversion rate

That view gives managers enough to act quickly.
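For teams assembling this view from their own review records, a few of the dashboard numbers can be computed directly. The sketch below assumes one week of reviews in a hypothetical dict format with an overall `score`, per-category `ratings` on the 0/1/2 scale, and a `flagged` boolean; the 30-day score change and quality-versus-conversion figures would come from longer history and outcome data.

```python
from statistics import mean


def dashboard_summary(reviews, coaching_sessions):
    """Weekly dashboard numbers from this week's reviewed calls.

    Each review is assumed to look like:
    {"rep": "A", "score": 78.6, "ratings": {"discovery": 1, ...}, "flagged": False}
    """
    categories = reviews[0]["ratings"].keys()
    # Average 0/1/2 rating per scorecard category across all reviewed calls
    by_category = {c: mean(r["ratings"][c] for r in reviews) for c in categories}
    reps = sorted({r["rep"] for r in reviews})
    return {
        "calls_reviewed": len(reviews),
        "team_average": mean(r["score"] for r in reviews),
        "score_by_rep": {rep: mean(r["score"] for r in reviews if r["rep"] == rep)
                         for rep in reps},
        "lowest_skill": min(by_category, key=by_category.get),
        "highest_skill": max(by_category, key=by_category.get),
        "flagged_calls": sum(r["flagged"] for r in reviews),
        "coaching_sessions": coaching_sessions,
    }
```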

What high-performing sales managers do differently

Managers who track call quality well at scale usually do a few things consistently.

- Define what good looks like in writing.

- Use a small set of scorecard categories instead of a bloated form.

- Sample calls on purpose.

- Review both strong and weak calls.

- Connect call quality to pipeline and revenue.

- Calibrate scoring across managers.

- Coach one behavior at a time.

- Use transcripts and conversation data to find patterns faster.

- Treat call quality as a process, not as a side task.

Examples of how this works in real teams

Example 1: SaaS sales team

A manager sees that demos booked per rep are flat even though call volume is strong. After reviewing transcripts, the manager finds that reps are rushing discovery and pitching too early. The scorecard shows low discovery scores across the team. Coaching focuses on question structure and listening. Demo quality improves over the next month.

Example 2: Home services call center

A regional manager sees lower booking rates in one market. Call reviews show that reps are not confirming appointment windows clearly and callers leave uncertain. The issue is not lead volume. It is next-step control. A revised script and coaching raise booking rates.

Example 3: B2B outbound team

A team has decent connect rates but weak meeting conversion. Call reviews show that reps are talking too much in the opening minute. Managers begin tracking the talk-to-listen ratio and first-minute structure. Reps start earning more second-stage conversations.

Example 4: Legal intake team

One office appears to underperform on signed cases. Review shows that the intake team handles emotional callers too quickly and misses key intake facts. Managers add empathy and intake completeness to the scorecard. Better call quality leads to stronger case screening.

How sales managers know the system is working

A good call quality program should produce visible changes such as:

- more consistent scoring across managers

- clearer coaching sessions

- faster improvement in weak skill areas

- better conversion at key call stages

- fewer repeated mistakes

- stronger onboarding for new reps

- more confidence in performance reviews

- clearer links between call behavior and revenue results

That is the real test. The program should improve performance, not just create more forms.

Final thoughts

Sales managers do not need to listen to every call to manage call quality well at scale. They need a structured way to define quality, review the right calls, spot patterns, coach clearly, and tie conversation quality to business outcomes.

When that system is in place, call review stops being random and starts becoming a repeatable part of sales management. That is how teams improve quality across more reps, more calls, and more markets without losing control.

FAQs


How many calls should a sales manager review per rep?

There is no single number that fits every team, but many managers review 2 to 5 calls per rep per week depending on team size, call volume, and rep performance. New hires and struggling reps usually need more review coverage.


What is the best way to score call quality?

The best method is a short scorecard with clear, observable behaviors tied to the sales motion. Most teams do better with 6 to 10 categories than with a long checklist.


Can AI replace manual call review?

No. AI helps managers find patterns, flag calls, and speed up review, but managers still need to judge context, coaching needs, and business relevance.


What should sales managers track besides the score?

They should also track quality trends by rep, team, call type, lead source, and outcome. The strongest programs connect quality scores to meetings booked, qualified leads, opportunities, and close rates.


Why do managers need calibration?

Calibration keeps scoring fair across managers. Without it, reps may get different scores for the same behavior, which weakens trust in the whole program.